Densely Connected Convolutional Networks by Gao Huang, Zhuang Liu, Kilian Q. Weinberger, Laurens van der Maaten

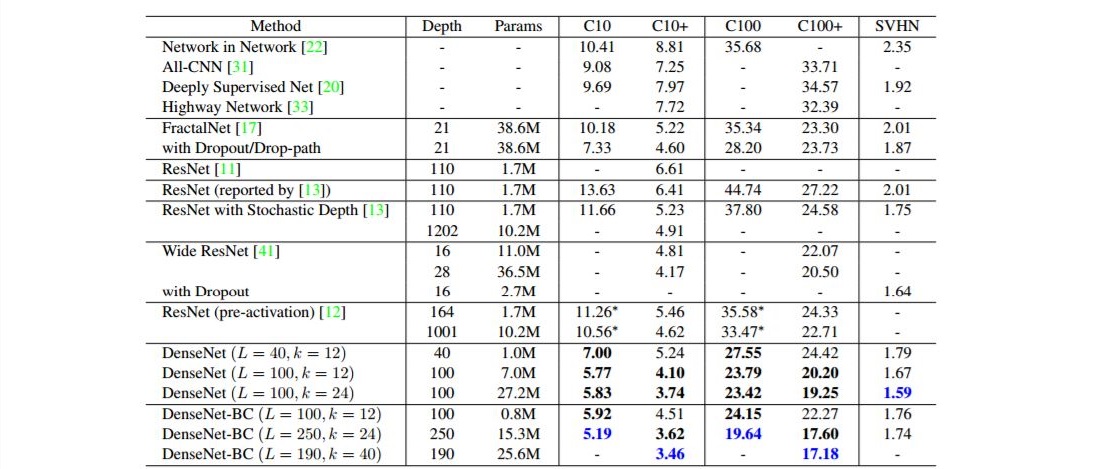

Recent work has shown that convolutional networks can be substantially deeper, more accurate, and efficient to train if they contain shorter connections between layers close to the input and those close to the output. In this paper, we embrace this observation and introduce the Dense Convolutional Network (DenseNet), which connects each layer to every other layer in a feed-forward fashion. Whereas traditional convolutional networks with L layers have L connections - one between each layer and its subsequent layer - our network has L(L+1)/2 direct connections. For each layer, the feature-maps of all preceding layers are used as inputs, and its own feature-maps are used as inputs into all subsequent layers. DenseNets have several compelling advantages: they alleviate the vanishing-gradient problem, strengthen feature propagation, encourage feature reuse, and substantially reduce the number of parameters. We evaluate our proposed architecture on four highly competitive object recognition benchmark tasks (CIFAR-10, CIFAR-100, SVHN, and ImageNet). DenseNets obtain significant improvements over the state-of-the-art on most of them, whilst requiring less memory and computation to achieve high performance. Code and models are available at https://github.com/liuzhuang13/DenseNet.
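The connectivity pattern in the abstract can be illustrated with a small sketch. This is not the authors' code: it is a framework-free toy in plain Python, and the names `count_direct_connections`, `dense_forward`, and `toy_layer` are hypothetical. It assumes that the network input counts as a source for every layer, which is what makes the total come out to L(L+1)/2, and it stands in for a convolution with a function that simply sums its inputs.

```python
def count_direct_connections(num_layers: int) -> int:
    """Count connections when each layer reads from every earlier source.

    Assumption: the network input counts as a source, so layer i
    (1-indexed) has i incoming connections.
    """
    connections = 0
    for layer in range(1, num_layers + 1):
        # Layer `layer` reads the input plus the outputs of layers 1..layer-1.
        connections += layer
    return connections


def dense_forward(x, layers):
    """Toy dense block: every layer sees the concatenation of all earlier features."""
    features = [x]
    for layer in layers:
        # Concatenate the input and all previously produced feature lists.
        concatenated = [value for feature in features for value in feature]
        features.append(layer(concatenated))
    # Return everything concatenated, so later stages could reuse all features.
    return [value for feature in features for value in feature]


def toy_layer(inputs):
    """Hypothetical stand-in for a conv layer: emits one feature, the sum of its inputs."""
    return [sum(inputs)]


if __name__ == "__main__":
    print(count_direct_connections(5))                  # 15, i.e. 5 * 6 / 2
    print(dense_forward([1.0, 2.0], [toy_layer] * 3))   # [1.0, 2.0, 3.0, 6.0, 12.0]
```

Each toy layer adds one new feature while reading all earlier ones, so the input to later layers grows steadily even though each layer itself stays small. That is one way to read the abstract's claim about feature reuse with fewer parameters, though the real network uses convolutions, batch normalization, and other details not shown here.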

From the main implementation page at: https://github.com/liuzhuang13/DenseNet

"... Other Implementations

- Our Caffe Implementation

- Our (much more) space-efficient Caffe Implementation.

- PyTorch Implementation (with BC structure) by Andreas Veit.

- PyTorch Implementation (with BC structure) by Brandon Amos.

- MXNet Implementation by Nicatio.

- MXNet Implementation (supporting ImageNet) by Xiong Lin.

- Tensorflow Implementation by Yixuan Li.

- Tensorflow Implementation by Laurent Mazare.

- Tensorflow Implementation (with BC structure) by Illarion Khlestov.

- Lasagne Implementation by Jan Schlüter.

- Keras Implementation by tdeboissiere.

- Keras Implementation by Roberto de Moura Estevão Filho.

- Keras Implementation (with BC structure) by Somshubra Majumdar.

- Chainer Implementation by Toshinori Hanya.

- Chainer Implementation by Yasunori Kudo.

- Fully Convolutional DenseNets for segmentation by Simon Jegou. ..."
